One of the central tasks in Bayesian machine learning is computing the posterior distribution given the data and a prior. Variational Inference is a popular technique for this. However, in its batch form, each update requires a pass over the entire dataset, so it scales poorly to large datasets. Fortunately, it can also be performed in a stochastic setting, where each update uses only a subsample of the data.

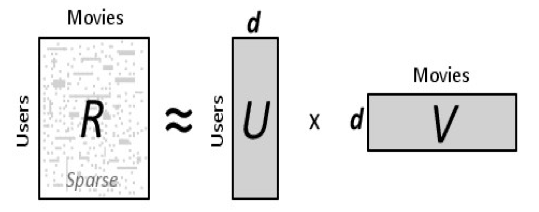

As part of this project, we surveyed the literature on Stochastic Variational Inference (SVI) methods. We also formulated and implemented an SVI version of Hierarchical Poisson Matrix Factorization [Gopalan et al., 2015]; the original paper provides only batch VB (Variational Bayes) updates.
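
To give a feel for the kind of update SVI relies on, here is a minimal sketch on a toy conjugate model: Poisson counts with a Gamma prior on the rate. This is not the Hierarchical Poisson Matrix Factorization model and not the project's implementation. The function name `svi_gamma_poisson`, its parameters, and the step-size schedule `rho = (t + tau) ** (-kappa)` are assumptions chosen for illustration. Each step forms a noisy estimate of the posterior parameters from a minibatch (rescaled as if the minibatch were the full dataset) and blends it into the current estimate with a decaying step size.

```python
import numpy as np

def svi_gamma_poisson(x, a0=1.0, b0=1.0, batch_size=50, n_steps=2000,
                      tau=1.0, kappa=0.7, seed=0):
    """Toy SVI for x_i ~ Poisson(theta), theta ~ Gamma(shape=a0, rate=b0).

    Returns the (shape, rate) of the variational Gamma approximation.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x)
    n = len(x)
    a, b = a0, b0  # initialise the variational parameters at the prior

    for t in range(1, n_steps + 1):
        # Sample a minibatch without replacement.
        batch = rng.choice(x, size=batch_size, replace=False)

        # Intermediate estimate: the conjugate update we would get if the
        # minibatch were replicated to the size of the full dataset.
        a_hat = a0 + (n / batch_size) * batch.sum()
        b_hat = b0 + n

        # Decaying step size; kappa in (0.5, 1] satisfies the usual
        # Robbins-Monro conditions.
        rho = (t + tau) ** (-kappa)

        # Weighted average of the old parameters and the noisy estimate.
        a = (1.0 - rho) * a + rho * a_hat
        b = (1.0 - rho) * b + rho * b_hat

    return a, b


# Usage: compare the SVI estimate of the rate with the exact posterior mean.
data = np.random.default_rng(1).poisson(3.0, size=10_000)
a, b = svi_gamma_poisson(data)
exact_mean = (1.0 + data.sum()) / (1.0 + len(data))
print(a / b, exact_mean)
```

Because this toy model has no local latent variables, the exact posterior is available in closed form, which makes it easy to check that the stochastic updates settle near the right answer. A model with per-observation latent variables would additionally need a local optimisation step for each minibatch.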